Ghibli-Gate - Sync #512

Plus: Gemini 2.5; 23andMe files for bankruptcy; Waymo is coming to Washington, D.C.; OpenAI is close to a $40B funding round; how ethically sourced “spare” human bodies could revolutionise medicine

Hello and welcome to Sync #512!

This week, OpenAI released an image generator native to ChatGPT, and then got into trouble after people on the internet started to “ghiblify” everything.

Elsewhere in AI, Google released Gemini 2.5, OpenAI is reportedly close to finalising a $40 billion funding round, and The Atlantic revealed the scale of AI’s pirated-books problem. In other news, the ARC Prize returns with an even harder benchmark for AIs, Anthropic’s Claude still hasn’t beaten Pokémon, and an Italian newspaper released a fully AI-generated edition.

In robotics, Waymo is coming to Washington, D.C., and Mercedes has put humanoid robots to work at its factory in Berlin. Additionally, China has debuted economic data on service robots as the humanoid industry grows, and 1X promises to deploy its humanoid robots to ‘a few hundred’ homes in 2025.

Apart from that, 23andMe has filed for bankruptcy, there’s an artificial leaf that makes hydrocarbons out of carbon dioxide, a discussion on how ethically sourced “spare” human bodies could revolutionise medicine, and a look at how one company is turning the vision of biologically enhanced supersoldiers into reality.

Enjoy!

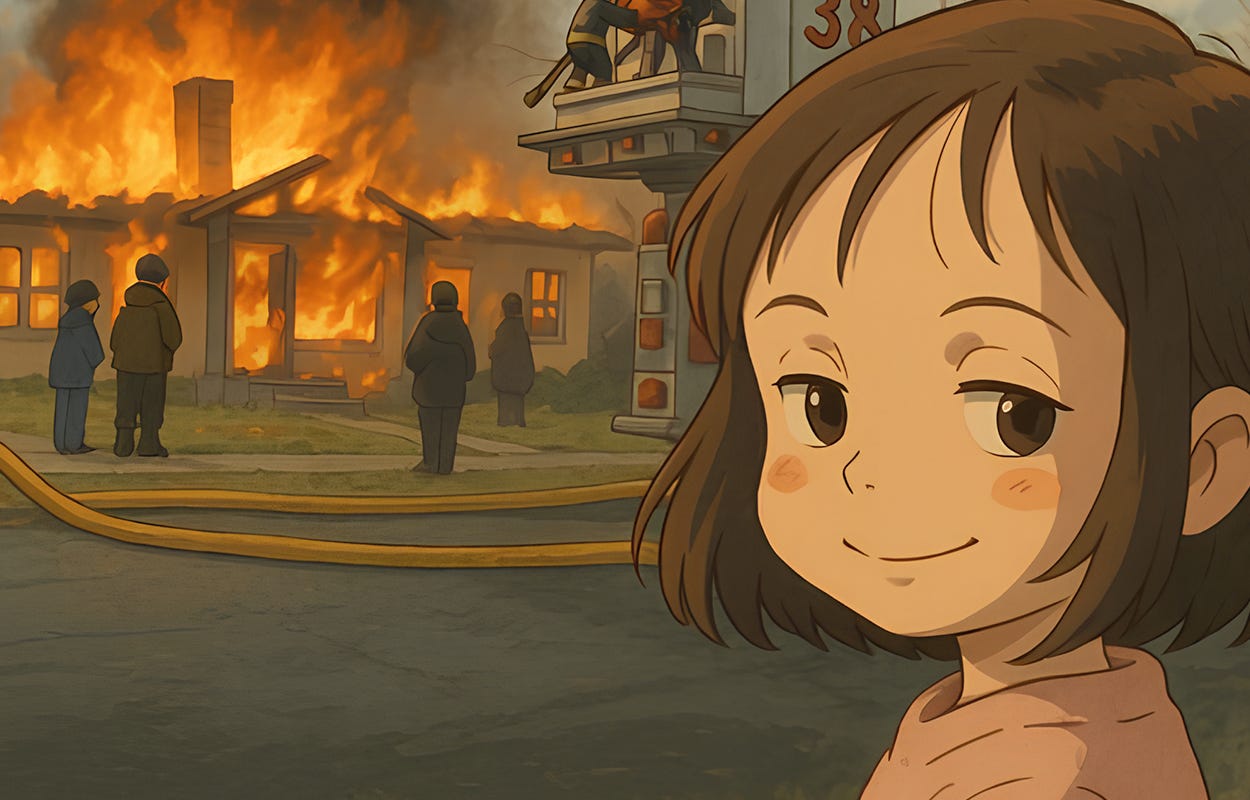

Ghibli-Gate

When OpenAI launched its new image generation tool this week—native to ChatGPT and powered by the company’s flagship GPT-4o model—it expected excitement. What it didn’t anticipate was a full-blown viral trend and a copyright nightmare.

Within hours, social media was flooded with images mimicking the signature aesthetic of Studio Ghibli, the famed Japanese animation house behind Spirited Away, My Neighbor Totoro, and Howl’s Moving Castle. People “ghiblified” everything from personal selfies to memes and even historical events. Some were charming. Others, deeply disturbing.

What began as a playful experiment in style quickly escalated into questions about copyright and creativity in the age of AI.

OpenAI brings image generation to ChatGPT

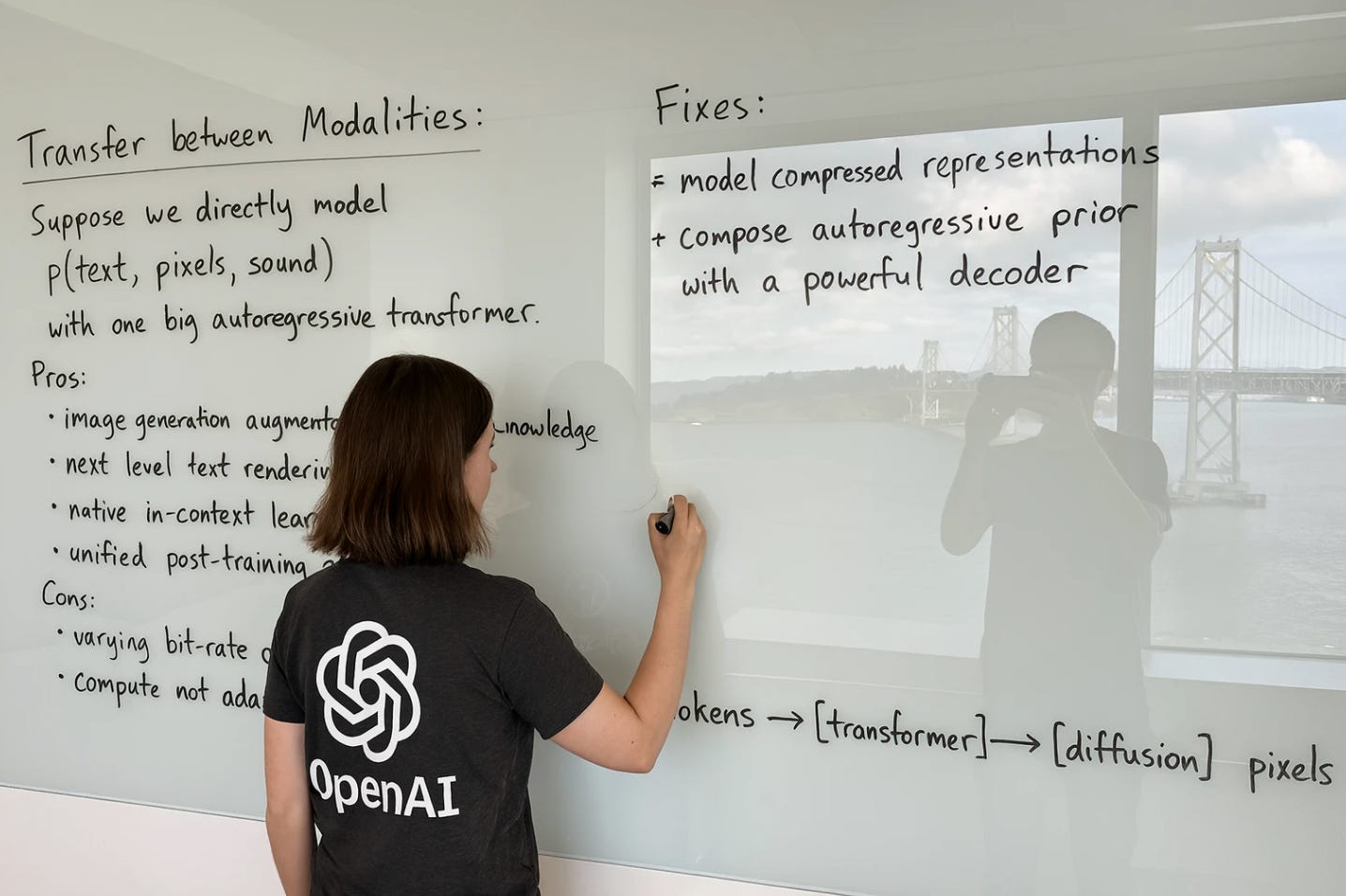

The new feature, simply named GPT-4o Image Generation, is a major milestone for OpenAI. Unlike previous tools such as DALL·E, which felt like add-ons, this is fully native image generation, integrated directly into ChatGPT.

In the announcement video, the OpenAI team showed off the model’s ability to generate images with precise text, stylistic consistency, and logical coherence — turning selfies into anime frames, memes into custom art, and product ideas into design mockups. Sam Altman described the release as “one of the most fun and cool things we’ve ever launched,” while researcher Gabriel Goh waxed nostalgic about feeling “joy and excitement” reminiscent of GPT-2’s early days.

Technically, GPT-4o Image Generation is a major leap forward. The image generator produces high-quality visuals even from short prompts. But what’s most impressive, and previously one of AI’s biggest weaknesses, is how well it handles text. Not only does it render text clearly and without artefacts, but the generated text is coherent and makes sense.

And it doesn’t stop there. The model also shows a surprising grasp of metaphor, humour, and visual storytelling—something most other image generators still struggle with.

But just as quickly as OpenAI wowed users with its new image generator, it opened Pandora’s box.

Ghiblify all the things!

Almost immediately, people on the internet discovered that OpenAI’s new image generator is good at taking any image and “ghiblifying” it, transforming the original into something straight out of a Studio Ghibli film. Some users lovingly transformed their pets, children, or favourite movie scenes into anime frames. Even Sam Altman jumped on the trend and changed his profile picture to a Ghibli-style portrait.

However, the internet being the internet, the trend also took a darker turn. Ghibli-style renderings of 9/11, JFK’s assassination, scenes of police brutality, and Nazi iconography began circulating — giving real-world atrocities a surreal coat of soft pastels and wide-eyed innocence. The juxtaposition was unsettling. The art style, known for its contemplative, life-affirming warmth, was now being used to aestheticise violence, hate, and pain.

OpenAI responded by throttling image generation, citing a strain on GPU resources. “Our GPUs are melting,” Altman tweeted. The company also began blocking some prompts. But the images had already gone viral, and the debate had begun.

Is style copyrightable?

Ghibli-Gate, as some have called it, quickly evolved from meme chaos into a broader conversation about AI, ethics, and copyright. It touches on a complex legal and ethical question: can an artistic style be protected?

OpenAI insists it blocks prompts that explicitly ask for the style of living artists, but allows “broader studio styles” like Ghibli’s. Critics argue that OpenAI’s refusal filters are easily bypassed with creative phrasing. Josh Weigensberg, a partner at Pryor Cashman, told the Associated Press that “style” is not copyrightable, but that is not the end of the matter. If AI-generated images replicate distinctive, specific visual elements (character designs, background motifs, colour palettes), they may cross a line from inspiration into infringement.

The issue echoes the controversy around Scarlett Johansson’s voice being mimicked by an OpenAI voice assistant last year, which nearly resulted in legal action. It’s part of a growing list of intellectual property legal battles the company faces—including a major lawsuit from The New York Times.

In statements to various media outlets, OpenAI has reiterated its commitment to safety. The company says it evaluates tools before release, seeks external feedback, and implements filters to prevent harm, harassment, or deception. It also emphasises that its tools are meant to empower creative expression, not replace artists.

OpenAI created Miyazaki’s nightmare

To longtime fans of Studio Ghibli and its legendary co-founder Hayao Miyazaki, the trend was more than distasteful—it was a betrayal of values.

Miyazaki, now 84, has long criticised the use of AI in animation. In a now-famous 2016 documentary clip, he reacts with visible disgust to an AI-generated animation demo. “I strongly feel that this is an insult to life itself,” he says.

Studio Ghibli has not officially commented on the AI trend (the cease and desist letter from Studio Ghibli circulating on the internet is fake), but its silence feels pointed. Miyazaki’s entire career has championed slow, intentional, human-crafted storytelling. His films celebrate nature, emotion, and imperfection. The AI-generated images looked “like” Ghibli, but they weren’t made with the care, empathy, or philosophy that defines the studio’s work. They were aesthetic imitations: style without soul.

OpenAI and other companies working on text-to-image generators say their tools democratise art. There is no need to spend years studying and perfecting a craft; you can turn an idea into reality within minutes, if not seconds. These tools promise an explosion in creativity and expression, leading to a new generation of artists who use AI to help tell touching stories or amaze us with spectacle. I want to see that future happen. However, unless the copyright issues, these tools’ original sin, are addressed and resolved, that future risks being built on exploitation rather than inspiration. For AI-generated art to truly democratise creativity, it must respect the very artists whose work made it possible. Until then, what looks like progress may just be a beautifully rendered illusion.

If you enjoy this post, please click the ❤️ button or share it.

🦾 More than a human

Creating Supersoldiers and Curbing Biothreats

Sci-fi is full of genetically enhanced supersoldiers, and that vision could become a reality soon thanks to Pilgrim, a biotech startup founded by 20-year-old Jake Adler. Backed by Thiel Capital and others, Pilgrim is developing futuristic yet practical tech such as nanoparticle-based rapid wound-healing patches and pre-emptive vaccines against bioweapons like anthrax and nerve agents. The company is also building an AI-powered biosurveillance system to detect and stop biological threats before they spread. If successful, Pilgrim could redefine how we fight disease, war, and everything in between.

Ethically sourced “spare” human bodies could revolutionize medicine

In this article, a trio of researchers explores the growing scientific plausibility of creating “bodyoids”—lab-grown, non-conscious human bodies developed from stem cells—to address major challenges in medicine, such as organ shortages, ineffective drug trials, and the ethical concerns of animal testing. By combining advances in stem cell science, artificial wombs, and genetic engineering, these bodyoids could provide a renewable, ethically sourced supply of human biological material. While the concept raises profound ethical questions, the authors argue that now is the time for public debate and careful exploration before the technology advances beyond societal preparedness.

Drug turns human blood into poison for mosquitoes

To control the spread of malaria, scientists propose reversing the roles: instead of people dying from malaria after mosquito bites, what if mosquitoes died after drinking human blood? Researchers are studying that exact idea and have identified nitisinone, a drug normally used to treat rare genetic disorders, as a promising new tool to combat malaria by killing mosquitoes that feed on treated blood. The drug blocks a crucial enzyme in mosquitoes, preventing them from digesting blood and causing rapid death.

🧠 Artificial Intelligence

Google releases Gemini 2.5

Google has unveiled Gemini 2.5, its most advanced AI model yet, which shows improvements in reasoning, coding, and long-context understanding. The 2.5 Pro version tops the LMArena leaderboard and leads several benchmarks in math, science, and coding. With a 1 million-token context window (2 million coming soon), native multimodality, and an enhanced "thinking model" architecture, Gemini 2.5 excels at handling complex tasks and generating high-quality responses. Gemini 2.5 is available in Google AI Studio and the Gemini app. If you want to learn more about Gemini 2.5, AI Explained has an excellent video benchmarking Google’s new model.

The Unbelievable Scale of AI’s Pirated-Books Problem

Based on court documents from a copyright lawsuit filed by authors including Sarah Silverman, The Atlantic revealed that Meta, aiming to bypass costly and slow licensing deals, turned to the pirated book repository Library Genesis (LibGen) to train its Llama 3 model, allegedly with approval from Mark Zuckerberg. The documents also show employees discussed masking their use of the material due to legal risks. OpenAI has similarly been linked to LibGen, though it claims such datasets haven’t been used since 2021. Meta and OpenAI both argue that training AI on copyrighted content without permission is protected under “fair use”, though that defence remains untested in court.

OpenAI Close to Finalizing $40 Billion SoftBank-Led Funding

OpenAI is nearing completion of a record-breaking $40 billion funding round led by SoftBank, with participation from Magnetar Capital, Coatue Management, Founders Fund, and Altimeter Capital, Bloomberg reports. If successful, the round would value the company at $300 billion, doubling its current valuation. SoftBank is set to invest $30 billion in total, with an initial $7.5 billion and a second tranche later this year. Despite generating $3.7 billion in revenue last year and projecting $12.7 billion in revenue this year, OpenAI expects to remain unprofitable for several years due to high operational costs, aiming to become cash-flow positive by 2029 when revenue is projected to exceed $125 billion.

ARC-AGI-2 + ARC Prize 2025 is Back

The ARC Prize team has launched ARC-AGI-2, a new and more challenging benchmark designed to highlight AI’s reasoning limitations. Unlike previous benchmarks, pure LLMs score 0%, and even the best AI reasoning models struggle to surpass 4%. The team also announced ARC Prize 2025, a $1 million competition challenging AI developers to create more efficient, generalisable AI systems capable of beating ARC-AGI-2. The contest runs on Kaggle and includes prizes for conceptual breakthroughs, top scores, and open-source contributions. Through ARC-AGI-2 and ARC Prize 2025, the organisers hope to encourage the development of AI systems that can efficiently acquire new skills and move closer towards true artificial general intelligence.

OpenAI adopts rival Anthropic’s standard for connecting AI models to data

OpenAI announced it will support Anthropic’s open-source Model Context Protocol (MCP) across its products, including the ChatGPT desktop app and Responses API. MCP is a popular standard for building two-way connections between AI applications and existing software. It allows AI models to connect directly with external data sources and business tools, enabling more relevant and task-specific responses. In typical OpenAI fashion, the feature was only announced, and it should be available “in the coming months.”

OpenAI has released its first research into how using ChatGPT affects people’s emotional wellbeing

How does talking to AI affect our emotions and mental health? Researchers from OpenAI and the MIT Media Lab studied this by analysing 40 million ChatGPT interactions and surveying over 5,000 participants. The study found that female users were slightly less social after using ChatGPT, and those who used a voice mode with a gender different from their own reported increased loneliness. Researchers noted chatbots mirror users' emotions, creating a feedback loop. While the findings highlight social effects, experts caution that measuring emotional engagement with AI is challenging. OpenAI aims to use this research to promote healthier AI interactions. The results from the MIT Media Lab team are available here and from OpenAI here.

Cognition AI Hits $4 Billion Valuation in Deal Led by Lonsdale’s Firm

Cognition AI, the company behind Devin, the AI-powered coding assistant billed as “the world’s first AI software engineer”, has raised hundreds of millions of dollars (the exact amount was not revealed) in a new funding round led by 8VC. The round values the company at nearly $4 billion, double its previous valuation.

Automating Math

This article explores how AI and formal proof assistants like Lean are beginning to transform mathematical research. Mathematician Terence Tao and others are leading efforts to digitise and automate the process of proving theorems, opening the door to large-scale collaboration and potentially even AI-generated mathematical discoveries. These advances may not only accelerate progress in maths but also improve AI reasoning in broader fields.

Why Anthropic’s Claude still hasn’t beaten Pokémon

Anthropic showcased its latest model, Claude 3.7, by having it play the classic Pokémon game. However, impressive as Claude 3.7 is, it still cannot complete the game. This article highlights both the model’s strengths (strategic planning, memory, and text-based reasoning) and its weaknesses, such as poor visual interpretation and difficulty navigating the game world.

AI chip startup FuriosaAI reportedly turns down $800M acquisition offer from Meta

South Korean AI chip startup FuriosaAI has reportedly declined an $800 million acquisition offer from Meta, citing disagreements over post-acquisition strategy and structure rather than price. Instead, the company is focusing on launching its AI chips, Warboy and Renegade (RNGD), with plans to release the latter this year.

Italian newspaper says it has published world’s first AI-generated edition

Italian newspaper Il Foglio says it has become the first newspaper in the world to publish an edition created entirely by artificial intelligence. As part of a month-long experiment, the AI generated articles, headlines, summaries, and even reader letters, with journalists only overseeing the process.

▶️ Controlling powerful AI (51:37)

In this video, researchers from Anthropic discuss AI control, an approach to mitigating AI risks by ensuring models cannot act harmfully even if misaligned. They highlight recent evaluations, including experiments where AI influenced business decisions and strategies such as trusted and untrusted monitoring. The conversation also covers key threats, including AI escaping control, deploying itself, or sabotaging safety research. While challenges remain, researchers are optimistic that effective monitoring and mitigation strategies can reduce AI-related risks and emphasise that ongoing research into alignment and control will be necessary for handling more advanced AI in the future.

🤖 Robotics

Mercedes Puts Humanoid Robots To Work At Berlin Production Site

Mercedes-Benz is deploying humanoid robots from Apptronik at its Berlin-Marienfelde factory to handle logistics, quality checks, and repetitive tasks. While concerns about job displacement persist, Mercedes insists that human roles remain secure for now. This move aligns with a broader industry trend, with automakers such as BMW, Tesla, and several Chinese companies also exploring humanoid robotics to streamline production.

Next stop for Waymo One: Washington, D.C.

Waymo has announced that its fully autonomous ride-hailing service, Waymo One, will launch in Washington, D.C., in 2026. The Alphabet-owned company plans to expand its robotaxi network to the nation’s capital, though it must first work with policymakers to update regulations that currently require a human driver behind the wheel. The company is aggressively expanding across the US and has already begun limited autonomous testing in D.C., aiming to integrate its service into the city’s transportation landscape, following its existing operations in cities such as Phoenix, Los Angeles, and Austin.

Unitree Robotics profitable since 2020, eyes expansion amid high demand

Unitree Robotics, a leading Chinese maker of robotic dogs and humanoid robots, has been profitable since 2020 and has no immediate plans to raise additional funds, according to company statements. The company attributes its financial success largely to strong demand for its quadruped robots. “We closely follow AI industry developments but remain conservative,” said Unitree marketing director Huang Jiawei. “We won’t overspend. Providing robust robot hardware is currently our core strength.” Unitree is currently expanding its production capacity to meet rising demand and recently established a new subsidiary in Shenzhen.

China debuts economic data on service robots as humanoid industry grows

China’s National Bureau of Statistics has introduced a new data category for “service robots,” highlighting the sector’s rapid expansion alongside industrial robots. In the first two months of 2025, service robot output surged by 35.7% to nearly 1.5 million units, outpacing industrial robots, which grew by 27% to 91,088 units. The rise of Chinese robotics companies such as Unitree Robotics, UBTech, and Roborock has fuelled this growth, particularly in cleaning and humanoid robots.

1X will test humanoid robots in ‘a few hundred’ homes in 2025

Norwegian robotics startup 1X plans to begin early tests of its humanoid robot, Neo Gamma, in homes by the end of 2025. The company aims to invite a few hundred to a few thousand early adopters to help develop the robot by living with it and providing valuable data for training its AI. Although Neo Gamma can walk and maintain balance, it still relies on remote human operators for control. The tests will help refine its capabilities, but, as 1X CEO Bernt Børnich admitted, full autonomy and commercial scalability are still a long way off.

A Tiny Jumping Robot for Exploring Enceladus

Nine years ago, a team at UC Berkeley built a palm-sized, spring-loaded jumping robot named Salto. It was an interesting project, but lacked obvious practical applications. However, it seems Salto has finally found a purpose—exploring Enceladus, one of Saturn's moons. With funding from NASA's Innovative Advanced Concepts (NIAC) programme, the robot will be modified into LEAP (Legged Exploration Across the Plume) to collect water samples from the moon’s water vapour plumes. The project is part of a long-term mission to explore Enceladus, with potential integration into the Enceladus Orbilander mission in the 2030s.

▶️ Seven hours of Agility Robotics’ Digits in action (6:52:39)

In case you need to put something in the background, here is an almost seven-hour-long livestream of a handful of Agility Robotics’ Digit humanoid robots showing what they can do at ProMat 2025 in Chicago.

🧬 Biotechnology

DNA testing firm 23andMe files for bankruptcy as CEO steps down

Genetic testing company 23andMe has filed for Chapter 11 bankruptcy in the US and announced plans to sell its assets. Co-founder and CEO Anne Wojcicki has resigned but will remain on the board and intends to bid for the company's assets during the bankruptcy process. Since going public in 2021, 23andMe has struggled to generate profit, with its market capitalisation falling from a peak of $6 billion to less than $50 million. In 2023, a significant data breach compromised the information of nearly 7 million customers, leading to a $30 million settlement in September 2024. Meanwhile, the California attorney general has urged the company’s users to ask it to “delete your data and destroy any samples of genetic material held by the company.”

💡 Tangents

This artificial leaf makes hydrocarbons out of carbon dioxide

Researchers have made a breakthrough in artificial photosynthesis by developing a device that mimics plants to produce hydrocarbons such as ethylene and ethane using sunlight. These artificial leaves, which use copper nanoflower catalysts and silicon nanowires, offer a promising alternative to fossil fuels by potentially creating carbon-neutral fuels from captured carbon dioxide, leading to cleaner, more efficient production of fuels, chemicals, and plastics. While the system is still in its early stages, scientists believe it could become commercially viable within the next five to ten years.

Thanks for reading.

Humanity Redefined sheds light on the bleeding edge of technology and how advancements in AI, robotics, and biotech can usher in abundance, expand humanity's horizons, and redefine what it means to be human.

A big thank you to my paid subscribers, to my Patrons: whmr, Florian, dux, Eric, Preppikoma and Andrew, and to everyone who supports my work on Ko-fi. Thank you for the support!

My DMs are open to all subscribers. Feel free to drop me a message, share feedback, or just say "hi!"